What is Search Engine Optimization in eCommerce:

There’s no chance you’ll ever appear in the SERPs if your site can’t be found (Search Engine Results Page). Welcome to your SEO learning journey, here you will learn SEO in 7 steps, let’s start. Search engines are answer machines. In order to provide the most appropriate answers for the questions searchers are asking, they exist to find, interpret, and organize the material of the internet.

It’s arguably the most critical piece of the SEO puzzle: there’s no chance you’ll ever appear in the SERPs if your site can’t be found (Search Engine Results Page).

- How do search engines work?

- What is search engine crawling?

- What is a search engine index?

- Search engine ranking

Crawling: Can search engines find your pages?

What is Search Engine Optimization?

Search engine optimization is the process of generating targeted traffic to a website from a search engine’s organic results (SEO).

Search engines have become ingrained in our daily routines. We use them for nearly everything, including studying, shopping, entertainment, and business, thanks to the ever-evolving area of e-Commerce.

What is Search Engine explain?

A search engine is a program that uses keywords or phrases to assist users to discover the information they’re looking for on the internet. By continuously monitoring the Internet and indexing every page they encounter, search engines are able to deliver results quickly—even with millions of websites online.

What is Search Engine in eCommerce?

For eCommerce websites, search engine optimization (SEO) is a must. It makes no difference how big or little your company is; when done effectively, SEO eCommerce methods can propel your website to the top of the search results, and your website pages will deliver the finest answers to a user’s search queries.

How Do Search Engines Work?

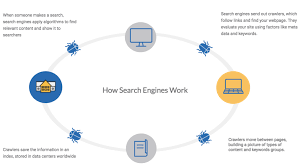

There are three main features of Search Engines:

Crawl: Scour the internet for information, and search for each URL they find in the code/content.

Index: During the crawling process, store and organize the content found. When a page is in the index, it is in the running phase to show the related queries as a result.

Rank: Include the pieces of content that best address the question of a searcher, indicating that the results are ordered from most relevant to least relevant.

What is Search Engine Crawling?

Crawling is the process of exploration in which a team of robots (known as crawlers or spiders) is sent out by search engines to find new and modified content. Content can differ, such as a website, an image, a video, a PDF, etc., but the content is found by links regardless of the format.

Crawling in search engine: The Google bot begins by retrieving some web pages and then follows the links to find new URLs on those web pages. Following this link, the crawler can find new content and add it to its index called Caffeine – an extensive database of discovered URLs – which can be retrieved later when a search Good match for the searcher looking for information that the content contained in this URL is one.

What is Search Engine Indexing?

The process of adding web pages to Google search is known as indexing. Google will crawl and index your website according to the meta tags you’ve included (index or NO-index).

Search engines process and store data that they find in an index, a vast archive of all the information that they have found and consider good enough to support searchers.

What is Search Engine Ranking:

Search engines scour their database for highly appropriate information when someone conducts a search and then orders the content in hopes of addressing the question of the searcher. This ordering of search results by importance is referred to as ranking. Generally speaking, you can conclude that the higher a website is ranked, the more important the search engine feels the question is to the domain.

Search engine crawlers may be partially or entirely blocked from your site, or search engines may be instructed to avoid saving certain pages in their list. While there may be reasons to do so, if you want your content to be found by searchers, you must first make sure it is accessible to crawlers and indexing. Otherwise, it is as good as hidden.

What is Google Search Engine Ranking & How do check Google Search?

Free Tips for SEO learners: Simply enter your Google search word ranking into the upper Omnibox if you’re using Google Chrome (where the URL is located). or Use Moz free Keyword Position Checker to see where your keywords rank

in Google. Simply type the domain name, keywords, and search engine into the blue ‘Check Position’ box and click the blue ‘Check Position’ button.

A conventional ranking algorithm would give a higher score to a page that had all of the query’s keywords and a lower ranking to one that only contained a portion of them.

Crawling: Can Search Engines find your Pages?

Keep an eye out for Site Visibility. “Allow search engines to index this site” should be selected. The site will now be indexed by Google and other search engines, making it searchable. It could take up to 6 weeks for search engines to crawl your site again and find new information.

Robots.txt:

Defining URL parameters in GSC

Can crawlers find all your important content?

Common navigation mistakes that can keep crawlers from seeing all of your sites.

If you already have a website, it would be nice to see how many pages you have in the index. This will give you some great insight into whether Google is crawling and searching for as many pages as you want, and nothing you don’t want.

The best way to validate your indexed pages is “site:yourdomain.com”, an advanced search operator. Head to Google and type “site:yourdomain.com” into the search bar. This will return results Google has in its index for the site specified:

Google doesn’t show the exact result (see “About XX results” above), however, it gives you a solid understanding of which pages are indexed on your web and how they appear in search results at the moment.

Monitor and use the index coverage report in Google, Google Search Console for more accurate results. If you do not currently have one, you can sign up for a free Google Search Console account.

If you don’t turn up in the search results somewhere, there are a few potential explanations why:

- Your website is brand new and hasn’t been operated yet.

- There are no links to your site from any external websites.

- The navigation on your web makes it hard for a robot to efficiently crawl it.

- Your page contains some simple code that blocks search engines, called crawler directives.

- Your domain has been penalized for spam tactics by Google.

Most people worry about making sure Google can locate their important sites, but it’s easy to forget that there are definitely pages you don’t want Googlebot to find.

This might include items like old URLs that have thin content, duplicate URLs (such as sort-and-filter parameters for e-commerce), special promo code pages, staging or test pages, and so on.

Use robots.txt to steer Googlebot away from certain pages and parts of your web.

Robots.txt:

Robots.txt files are stored in the root website directory (e.g. yourdomain.com/robots.txt) and show which parts of your website search engines can and should not crawl through unique robots.txt instructions, as well as the speed at which they crawl through your website.

How Googlebot treats robots.txt files:

- If Googlebot is unable to find a file with robots.txt for a site, the site will crawl.

- If a robots.txt file for a site is found by Googlebot, it will normally comply with the recommendations and continue to crawl the site.

- If Googlebot finds an error while attempting to access the robots.txt file of a site and is unable to decide whether or not one exists, the site will not crawl.

Not all web robots follow robots.txt. Malicious people (e.g., email address scrapers) create bots that do not follow this protocol. In reality, robots.txt files are used by certain bad actors to find where your private content has been stored.

Although it may seem logical to block crawlers from private pages such as login and admin pages so that they do not appear in the index, place the location of these URLs in a publicly accessible robot.txt file. It also means that people with bad intentions can find them more easily.

These pages are better for NoIndex and gate them behind a login form instead of putting them in your robots.txt file.

Defining URL parameters in GSC:

Some sites (most common with eCommerce) make the same content available to several different URLs by adding some parameters to the URLs.

If you’ve ever shopped online, you’ve limited your search by filters. For example, you can search for “shoes” on Amazon, and then refine your search by size, color, and style. Each time you refine, the URL changes slightly:

URL parameters javas: URL parameter values can be parsed by Javascript. You can accomplish this by using URL parameters.

How does Google understand which version of the URL should be used by searchers?

Google does a pretty good job of finding out the representative URL on its own, but you can use the Google Search Console URL Parameters function to tell Google exactly how you want your pages to be viewed by them.

If you use this feature to tell Google Bitcoin to say “No URLs with the ____ parameter,” then you must be asking to hide this content from the Google bot, resulting in That may result in the removal of pages from the search results. That’s what you want if duplicate pages are created with those criteria, but not ideal if you want to index those pages.

Can crawlers find all your important content?

- Is your content hidden behind login forms?

- Are you relying on search forms?

- Is text hidden within non-text content?

- Can search engines follow your site navigation?

Let’s learn about the optimizations now that you know some strategies to ensure that search engine crawlers stay away from your unimportant material.

Often, by crawling, a search engine would be able to locate parts of your web, but for one reason or another, other pages or sections may be obscured. It’s important to make sure that all the content you want to be indexed is found by search engines and not just your homepage.

Ask yourself this: Can the bot crawl through and not just to your website?

Is your content hidden behind login forms?

If you require users to log in, fill out forms, or respond to surveys before you can access certain content, search engines will not be able to view those protected pages. A crawler is definitely not logged in.

Are you relying on search forms?

Search types can’t be used by robots. Some people claim that search engines would be able to locate what their users are looking for if they put a search box on their site.

Is text hidden within non-text content?

To view text that you want to be indexed, non-text media types (images, video, GIFs, etc.) should not be used. While search engines are getting better at recognizing images, there is no guarantee that they will still be able to read and comprehend them. Inside the <HTML> markup of your website, it is always best to add text.

Can Search Engines follow your site Navigation?

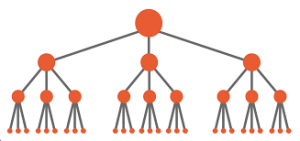

Just as a crawler needs to discover your site through links from other sites, to direct it from page to page, it needs a path of links on your own site. If you have a website you want to locate search engines, but from some other sites it is not connected to it, it is as good as invisible.

Common navigation mistakes that can keep crawlers from seeing all of your sites:

- Do you have clean information architecture?

- Are you utilizing sitemaps?

- Are crawlers getting errors when they try to access your URLs?

Many sites make the critical mistake of configuring navigation in ways that are inaccessible to search engines and hinder their ability to appear in search results.

- Getting smartphone navigation that displays different outcomes than navigating your desktop

- Any type of navigation where menu items are not present in HTML, such as navigation powered by JavaScript. Google has improved a lot in crawling and understanding JavaScript, but it’s still not a good practice. One of the surest ways to ensure that Google finds, understands, and organizes something is to put it in HTML.

- Personalization, or displaying specific visitor navigation to a particular form of visitor over others, might seem to mask a search engine crawler.

- Forgetting to connect through your navigation to a primary page on your website, note, that links are the paths crawlers follow to new sites!

This is why it is important for your website to have simple navigation and helpful frameworks for URL folders.

Do you have clean information architecture?

In order to increase productivity and findability for users, information architecture is the process of arranging and marking content on a website. The best design of knowledge is intuitive, which means users do not have to think too hard to flow through your website or find something.

Are you utilizing sitemaps?

Just what it sounds like is a sitemap: a list of URLs on your site that can be used by crawlers to find and index your content. One of the easiest ways to ensure Google is to find your top priority pages, create a file that meets Google’s standards and submit it through the Google Search Console. While presenting a sitemap doesn’t change the need for good site navigation, it can certainly help you navigate through all your important pages.

You will still be able to get it indexed by uploading your XML sitemap to the Google Search Console if your site doesn’t have any other sites linking to it. There is no guarantee that they will include in their index a submitted URL, but it’s worth a try!

Are crawlers getting errors when they try to access your URLs?

4xx Codes: If search engine crawlers are unable to navigate your content due to a client mistake.

5xx Codes: When search engine crawlers can’t access your content due to a server error

A crawler may encounter errors in the process of crawling the URLs on your web. You can go to the Google Search Console’s “Crawl Errors” report to find the URL on which this could happen.

– In this report, you will see server errors and no errors. Server log files can also show you this, as well as other information such as treasury frequency treasures. This is a more advanced tactic, we will not discuss it.

4xx Codes: If search engine crawlers are unable to navigate your content due to a client mistake

4xx errors are client errors, which means that the requested URL contains poor or unsatisfactory syntax. These might occur, just to name a few instances, because of a URL typo, deleted link, or broken redirect. When a 404 is reached by search engines, they cannot access the URL. They can get annoyed and quit when users hit a 404.

5xx Codes: When search engine crawlers can’t access your content due to a server error

5xx errors are server errors, which means that the server failed to fulfill the request to access the page or search engine on the page on which it is located. There is a tab devoted to these errors in the Search Console’s ‘Crawl Error’ report. This is usually because the URL request timed out, so Googlebot abandoned the request.

Fortunately, the 301 (permanent) redirect has a way to inform both searchers and search engines that the page has relocated.

If a page ranks for a query and you 301 it to a URL with different content, it could drop in the rank position because there is no longer the content that made it applicable to that specific query.

You also have the option of redirecting a page to 302, but this should be reserved for temporary moves and in instances where it is not as necessary to transfer connection equity. The 302s are kind of like a detour from a lane.

Once you’ve made sure your site is optimized for viability, the next business order is to make sure it can be indexed.

Most Popular SEO Blogs:

- Welcome to your SEO learning journey | Basic to Advance

- SEO-friendly blog post – 10 Tips for Writing

- Off-Page SEO: What It Is and Why It’s Important

- ON-PAGE SEO – Learn SEO in 7 steps | Basic to Advance

- What is a Search Engine? | Welcome to your SEO learning journey

- Keyword Research: How to Target and Prioritize keywords

- KEYWORD RESEARCH | Learn SEO in 7 Steps

- 7 Tips for keyword research in SEO – Things to Know

- WordPress SEO Tips | How to Boost Rankings

- Link building – How to build high-quality backlinks

- TRACKING SEO PERFORMANCE | Lean SEO in 7 steps